Deep learning for document understanding - part 1

Deep learning for document understanding - part 1

Deep learning for document understanding - part 1

Document understanding using deep learning techniques

part 1

Document processing

Automatic data extraction from documents is not a new issue, manual document processing is a major cost driver in organizations. Meaningful results are achieved in text recognition, using a variety of methods in artificial intelligence. The traditional way to digitize documents is optical character recognition, which recognizes text and is a proven and workable approach. The OCR though does not retain the formatting elements of the template and fails at recognizing symbols with sufficient precision due to the presence of tables and other elements.

In modern versions of optical recognition, neural networks are also used with radical improvements in performance. At the same time, however, the problem with text formatted in a specific way is substantial and not entirely resolved. When tables or other graphic elements are used, recognition libraries not only can't retain formatting, but the presence of such elements can dramatically lower results. Even when the format may be given, just small deviations could be challenging.

Automatic recognition of the used template is a big step towards fully understanding the document. The deep learning techniques allow document automatic processing independently of quality, scale, orientation and format.

Document understanding

At IBS AI Lab we've successfully applied deep learning object detection concepts to document processing. The results of the applied techniques, identifying structure and document’s content, based on object recognition with convolutional neural networks (CNN) combined with optical character recognition (OCR), are promising.

The data conceptualization following the ontological engineering principles of data presentation is an important phase, determining meaning and semantics fundaments. Based on the conceptualization phase we label the document accordingly as determine the concept’s area at the document image. One important next step is to define the template as the relation between concepts within the documents, referring to their relative position. Our approach proposes to recognize the template not as pixel-wise classification bit rather described as relations between concepts within the documents referring to their relative position.

Using different image processing techniques our approach applies deep learning algorithms combined with an explicit definition of a special relation between concepts highly effective solve the issue of layout recognition despite the different orientation or lack of good quality! We have tried several architectures of neural networks, one of the results could be an example with the impressive achievement of end-to-end accuracy over 96% with near-real processing time.

Documents are a rich source of information. A knowledge base could be implemented, describing existing and inferred concepts and would be greatly beneficial in the semantic interpretation.

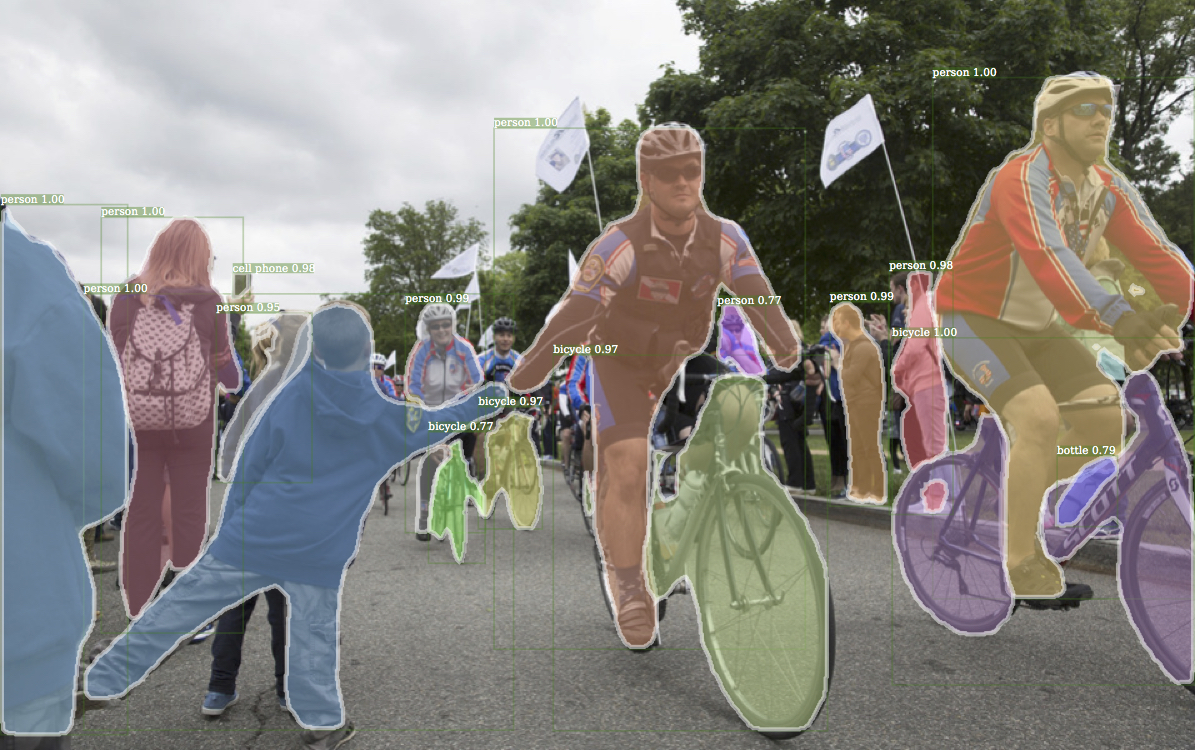

Object Detection

Convolutional neural network classifies the objects and finds the location within the document. We determine where the regions of a concepts interest are and the elements located within the regions. Labelling according to the required semantics may be different. We have chosen to mark regions, following concepts, such as a person, experts, purpose and context of the document. The recognized regions are representing the concepts within the document, a data structure comprising different attributes.

Along with our attempts, we encounter quite a few challenges, some of which are fonts and scale, quality, number of recognized objects. We've conducted various conceptual image area segmentation used for recognition. One of the main challenges encountered is the recognition of the small symbols. One hypothetical approach could require many OCR calls, which would be very costly in terms of performance, at the same time great advantage due to expected OCR’s excellent accuracy. Optical character recognition is trained for a sufficient number of fonts covering most of the used ones. Another interesting obstacle is the learning algorithm limitation in the number of recognized objects.

Our approach is very well-balanced between time and accuracy and implements the best compromise of an area of interests and concepts.

In the experiments we have used the IBM Power AI Vision (now IBM Vision Insights), a software which uses and implements the most modern computer vision convolutional neural network (CNN) architectures. We have conducted experiments with Detectron (FAIR):

Detectron

Software system Facebook AI Research implements state-of-the-art algorithms for detecting objects, including Mask R-CNN

|

The purpose of Detectron is to provide a high-quality, high-performance base for researching an object. It is designed to be flexible to support the rapid implementation and evaluation of new research. Detectron includes implementations of various algorithms for detecting objects like Mask R-CNN. Efficiently detects objects in an image while simultaneously generating a high-quality segmentation mask for each instance. The method, called Mask R-CNN, extends Faster R-CNN by adding a branch for predicting an object mask in parallel with the existing branch for bounding box recognition [“Mask R-CNN” K. He G. Gkioxari P. Dollar R. Girshick ´ Facebook AI Research (FAIR)]

IBM Power AI Vision is a software for computer vision and deep learning implementing state-of-the-art CNN architectures. The most efficiently document processing is achieved with Mask-RCNN. It can use objects labelled with polygons for greater training accuracy. Labelling with polygons is especially useful for small objects, objects that are at a diagonal, and objects with irregular shapes. However, training a data set that uses polygon labels takes longer than training with rectangular bounding boxes. If you want to use a Detectron model but want a shorter training time, you can disable segmentation and PowerAI Vision will use rectangles instead of polygons. The actual images are not modified, so you can train with segmentation later. The achieved Detectron with segmentation performance metrics: 96% accuracy, precision which shows true positive classification 100%, recall shows false negative at 98%, intersection over union 97% the intersection of grounding truth and predictive boxes.

Summary

The documents can be successfully automatically processed and understood using deep learning object localization and recognition. The holistic understanding of the document includes different techniques like deep learning and knowledge representation, allowing localization, recognition and inferred knowledge to combined in a productive end-to-end solution. The highly effective approach is applicable for large document types.

About the author

Kristina Arnaoudova, PhD, AI Lab manager at IBS Bulgaria

Kristina is an IT professional with 15+ years of experience in customer & business relationship management, IT service management and software development, specialized in financial services. Nowadays, Kristina is deeply into AI and Data Science research and development as a leader at IBS AI Lab and lecturer for AI at Sofia University.